By Dimi Sandu, Head of Solutions Engineering (EMEA)

Quality of Service (QoS) is the set of tools and mechanisms used to measure and control the performance of computer networks and applications, something that is critical in an enterprise environment.

In our previous article on SD-WAN, we described how, with the widespread adoption of public connectivity (such as broadband and LTE/5G), modern routers have multiple paths over which to communicate with each other. At any given point they have visibility of each circuit's performance, allowing them to make real-time decisions on routing sensitive traffic such as multimedia (this process is called performance-based routing).

To quantify the quality of transmission, an admin will look at four metrics:

- Bandwidth (the capacity of the link, i.e. how much data it can carry per second; see a detailed article here about bandwidth)

- Loss (the percentage of data packets lost)

- Delay (the time it takes for the packet to reach the destination)

- Jitter (the variation of delay between packets in the same data stream)
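
As a rough illustration of how the last three metrics can be derived from per-packet timestamps, here is a minimal sketch. The function name `stream_metrics` and the input format (sequence number mapped to a time in milliseconds) are assumptions made for this example, and jitter is computed in its simplest form, as the average change in delay between consecutive received packets.

```python
from statistics import mean

def stream_metrics(sent_ms, received_ms):
    """Return (loss %, average delay, jitter) for one data stream.

    sent_ms / received_ms map a packet sequence number to the time (in ms)
    at which it was sent / received. Both dicts are hypothetical inputs.
    """
    # Delay of every packet that actually arrived, in sending order.
    delays = [received_ms[seq] - sent_ms[seq]
              for seq in sorted(sent_ms) if seq in received_ms]
    # Loss: share of sent packets that never arrived.
    loss_pct = 100.0 * (len(sent_ms) - len(delays)) / len(sent_ms)
    avg_delay = mean(delays) if delays else None
    # Jitter: average variation of delay between consecutive packets.
    variations = [abs(b - a) for a, b in zip(delays, delays[1:])]
    jitter = mean(variations) if variations else 0.0
    return loss_pct, avg_delay, jitter

sent = {1: 0, 2: 20, 3: 40, 4: 60}
received = {1: 30, 2: 55, 4: 100}      # packet 3 never arrived
print(stream_metrics(sent, received))  # 25% loss, 35 ms delay, 5 ms jitter
```

Bandwidth is left out on purpose: it is a property of the link rather than something derived from a single stream's timestamps.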

Data Loss

Loss typically occurs during bursts or high volumes of traffic, with each router in the path running the risk of getting overloaded. The device has a buffer that holds packets awaiting processing (before a forwarding decision is made), but once that buffer is full the router has no option other than to drop incoming packets until space frees up.
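
The behavior described above can be sketched as a small simulation. This is a simplified model under stated assumptions, not how any particular router is implemented: time advances in ticks, the buffer holds a fixed number of packets, and the names `simulate_tail_drop`, `buffer_size` and `service_per_tick` are made up for this example.

```python
from collections import deque

def simulate_tail_drop(arrivals_per_tick, buffer_size, service_per_tick):
    """Return (forwarded, dropped, still_queued) after all ticks."""
    queue = deque()
    forwarded = dropped = 0
    next_id = 0
    for arrivals in arrivals_per_tick:
        # Incoming packets are buffered while there is room, otherwise dropped.
        for _ in range(arrivals):
            if len(queue) < buffer_size:
                queue.append(next_id)
            else:
                dropped += 1
            next_id += 1
        # The router forwards a limited number of packets per tick.
        for _ in range(min(service_per_tick, len(queue))):
            queue.popleft()
            forwarded += 1
    return forwarded, dropped, len(queue)

# A burst of 10 packets hits a 5-packet buffer in the second tick.
print(simulate_tail_drop([2, 10, 2, 0], buffer_size=5, service_per_tick=3))
# (9, 5, 0): five packets from the burst are lost
```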

If a loss occurs, the destination device can ask the source for the missing packets (TCP retransmission), but, in the case of real-time applications (such as VoIP or video conferencing), there is no time for the process to occur, resulting in pixelation and lost images/sounds.

Data Transmission Delay

Queuing in the buffer of a router is not the only factor affecting the delay. There is also a processing delay to consider, that is, the amount of time the device needs to inspect the packet and figure out where it needs to go.

Additionally, there is serialization delay (the time taken for the router to place the bits of the packet onto the outgoing interface), and propagation delay (how long it takes for the data to cross the medium until it reaches the next hop, where the process happens all over again).
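
To make the components concrete, the per-hop delay can be estimated as the sum of the four parts. The sketch below is a back-of-the-envelope calculation: the function name and parameters are assumptions for this example, and the default propagation speed of 200,000 km/s is an approximation for signals in optical fiber.

```python
def one_hop_delay_ms(packet_bytes, link_bps, distance_km,
                     processing_ms=0.0, queuing_ms=0.0,
                     propagation_km_per_s=200_000):
    """Estimate the delay (in ms) a packet experiences across one hop."""
    # Serialization: time to clock every bit of the packet onto the wire.
    serialization_ms = packet_bytes * 8 / link_bps * 1000
    # Propagation: time for the signal to travel the length of the medium.
    propagation_ms = distance_km / propagation_km_per_s * 1000
    return processing_ms + queuing_ms + serialization_ms + propagation_ms

# A 1500-byte packet on a 10 Mbps link spanning 1000 km:
# about 1.2 ms of serialization plus 5 ms of propagation.
print(round(one_hop_delay_ms(1500, 10_000_000, 1000), 2))
```

Note how the two terms scale differently: a faster link shrinks the serialization delay, but only a shorter path reduces the propagation delay.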

Jitter

Jitter matters for real-time applications for the same reason loss does: the destination cannot wait. A packet that arrives too late to be played in sequence is as good as lost, and there is no time to ask for a retransmit. For example, a videoconferencing application experiencing jitter will (hopefully) be programmed to compensate by reducing its audio and video quality, thus lowering the amount of data it needs.

Classification & Marking

Before implementing any QoS tools to deal with these situations, it is important to consider classification & marking. Without them, one cannot differentiate between critical applications (e.g. an employee running a Zoom call) and non-critical ones (e.g. guest Facebook traffic).

Both these processes should be done at the entry point of a network (on a router, access point, VoIP phone), and require the device performing them to analyze each packet, looking into the header fields for the required information (think of the header as the envelope of a letter). From source or destination IP, to port and even hostname, all of these can be used to classify the traffic.

For marking, the device will ‘stamp’ the header of each packet with an administrator-predefined tag, used by any subsequent routers when applying QoS policies (they will already have the information about what this packet is and its relative importance to the business, and won’t waste their time analyzing it).
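
The two steps can be sketched as follows, assuming the tag is a DSCP value in the IP header (a common choice, although the exact marking scheme is up to the administrator). EF (46), CS1 (8) and 0 are standard DSCP code points; the guest subnet, the UDP port range and the `Packet` type are hypothetical values invented for this example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

DSCP_EF = 46      # Expedited Forwarding, typically used for real-time media
DSCP_CS1 = 8      # often used for low-priority traffic
DSCP_DEFAULT = 0  # best effort

GUEST_NET = ip_network("10.20.0.0/16")  # hypothetical guest subnet
MEDIA_UDP_PORTS = range(16384, 32768)   # hypothetical real-time media ports

@dataclass
class Packet:
    src_ip: str
    dst_port: int
    protocol: str
    dscp: int = DSCP_DEFAULT

def classify_and_mark(pkt):
    """Inspect the header fields once at the network edge and stamp a tag."""
    if ip_address(pkt.src_ip) in GUEST_NET:
        pkt.dscp = DSCP_CS1
    elif pkt.protocol == "udp" and pkt.dst_port in MEDIA_UDP_PORTS:
        pkt.dscp = DSCP_EF
    else:
        pkt.dscp = DSCP_DEFAULT
    return pkt

call = classify_and_mark(Packet("10.1.0.5", 20000, "udp"))
guest = classify_and_mark(Packet("10.20.3.7", 443, "tcp"))
print(call.dscp, guest.dscp)  # 46 8
```

Downstream routers then only need to read `dscp`, rather than repeating the full inspection.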

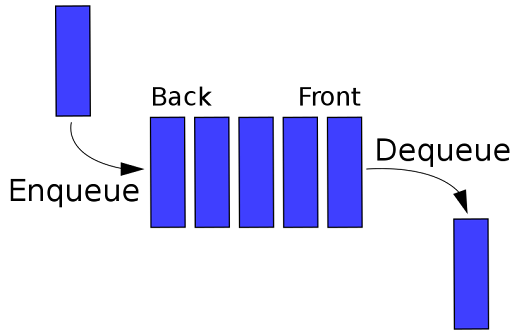

One of the main ways to protect business-critical applications is to queue them appropriately. Without any configuration, routers process packets in a FIFO (First In First Out) manner, treating that Facebook traffic exactly the same as the Zoom conference.

Diagram 1: FIFO queuing (created by Vegpuff)

Each vendor has their own custom queues, but the most common ones are:

- CBWFQ (Class Based Weighted Fair Queuing): building multiple queues, each for a class of traffic (e.g. web browsing, corporate traffic, real-time media), and servicing them disproportionately, with multiple packets in the more important queues being forwarded for each one in the low priority one (picture this as each class having its own defined proportion of the bandwidth)

- LLQ (Low Latency Queuing): defining a queue for real-time traffic and prioritizing it over the rest; all other queues are serviced only once the low latency queue is empty.
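
The combined effect of the two queue types can be sketched in a few lines. This is a deliberate simplification: it uses a packet-count weighted round-robin as a stand-in for CBWFQ (real implementations account for bandwidth share rather than packet counts), and the function and queue names are assumptions for this example.

```python
from collections import deque

def drain(priority_q, class_queues, weights):
    """Return the order in which queued packets are forwarded."""
    # LLQ: the low latency queue is always emptied first.
    out = list(priority_q)
    priority_q.clear()
    # Weighted round-robin over the remaining classes: each class may send
    # up to `weights[name]` packets per round.
    while any(class_queues.values()):
        for name, queue in class_queues.items():
            for _ in range(weights[name]):
                if not queue:
                    break
                out.append(queue.popleft())
    return out

voice = deque(["v1", "v2"])
classes = {"corp": deque(["c1", "c2", "c3", "c4"]), "web": deque(["w1", "w2"])}
print(drain(voice, classes, {"corp": 3, "web": 1}))
# ['v1', 'v2', 'c1', 'c2', 'c3', 'w1', 'c4', 'w2']
```

With a 3:1 weighting, three corporate packets leave for every web packet, while voice never waits behind either.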

In addition, an administrator can define traffic shaping and policing tools to keep traffic at acceptable levels (and avoid overloading the routers). While shaping limits the traffic flow by queuing packets above a threshold (the best analogy is a water tap: you close it slightly for a smaller flow), policing explicitly drops them (very useful for non-important traffic, such as that generated by guests, bursts of which should never impact other network functions).
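
The difference between the two is easiest to see side by side. The sketch below assumes a token bucket as the rate limiter (a common way to implement both, though not the only one) and, for simplicity, an unlimited backlog when shaping; `rate_limit` and its parameters are names invented for this example.

```python
def rate_limit(arrivals_per_tick, tokens_per_tick, bucket_size, mode):
    """Return (packets sent per tick, total dropped, final backlog)."""
    if mode not in ("shape", "police"):
        raise ValueError("mode must be 'shape' or 'police'")
    tokens = bucket_size
    backlog = 0
    dropped = 0
    sent_per_tick = []
    for arrivals in arrivals_per_tick:
        waiting = backlog + arrivals
        sent = min(waiting, tokens)  # one token is spent per packet sent
        tokens -= sent
        excess = waiting - sent
        if mode == "shape":
            backlog = excess         # shaping: hold the excess for later
        else:
            dropped += excess        # policing: drop the excess outright
        sent_per_tick.append(sent)
        # Refill the bucket for the next tick, up to its capacity.
        tokens = min(bucket_size, tokens + tokens_per_tick)
    return sent_per_tick, dropped, backlog

burst = [5, 0, 0]
print(rate_limit(burst, tokens_per_tick=2, bucket_size=2, mode="shape"))
# ([2, 2, 1], 0, 0): the burst is smoothed out over three ticks
print(rate_limit(burst, tokens_per_tick=2, bucket_size=2, mode="police"))
# ([2, 0, 0], 3, 0): the excess is discarded immediately
```

Shaping trades loss for delay, which is why it suits traffic that can wait, while policing keeps latency flat at the cost of dropped packets.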

Another popular tool amongst administrators is congestion avoidance, which tries to eliminate bottlenecks before they occur. Instead of letting a router’s queue fill up, resulting in loss and processing delays, the administrator can define what traffic gets dropped ahead of time, also taking into account its type. A further advantage is that hosts which support it can be made aware that congestion is building, and reduce the flow at the source (this is called Explicit Congestion Notification).
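
One well-known way to drop traffic "ahead of time" is random early detection with per-class thresholds (often called WRED). The sketch below shows only the drop-probability curve, under the assumption that the router tracks an average queue depth; the profile values and class names are hypothetical.

```python
def early_drop_probability(avg_queue_depth, min_th, max_th, max_p):
    """Probability of dropping an arriving packet before the queue is full."""
    if avg_queue_depth < min_th:
        return 0.0   # queue is short: never drop
    if avg_queue_depth >= max_th:
        return 1.0   # queue is nearly full: always drop
    # In between, the probability rises linearly from 0 up to max_p.
    return max_p * (avg_queue_depth - min_th) / (max_th - min_th)

# Hypothetical per-class profiles: (min threshold, max threshold, max_p).
PROFILES = {"guest": (10, 30, 0.5), "business": (25, 40, 0.1)}

for name, profile in PROFILES.items():
    print(name, early_drop_probability(20, *profile))
# guest 0.25
# business 0.0
```

At the same queue depth, guest traffic is already being thinned out while business traffic is untouched, which is the "taking into account its type" part of the technique.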

The techniques above come into use when a single company/entity owns the whole infrastructure and can push the right policies and configuration to support its critical traffic. But how would this work between two locations connected via multiple SP (Service Provider) links? A company using only private circuits (such as MPLS) can negotiate an SLA (Service Level Agreement) with the SP that covers its internal markings, so that the provider can use them in its backbone network for similar QoS mechanisms.

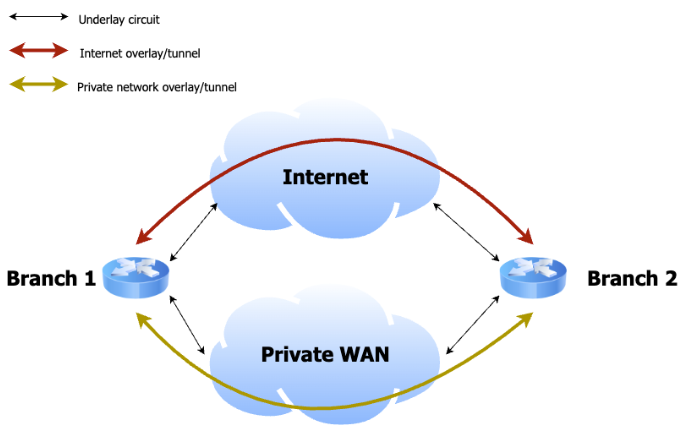

But, in the case of SD-WAN, each site is using multiple transmission links, including public ones (remember, Local Breakout is critical for cloud-based applications, shortening the overall path and thus minimizing delays and congestion). Here, two SD-WAN routers that want to communicate will build encrypted tunnels to one another (read about the importance of encryption here) over each underlay circuit, and monitor them constantly with the use of probes, or by analyzing real traffic.

Both source and destination routers will communicate with each other, describing the number of packets that arrived (this defines loss), but also how long it took them to do so (this defines latency & jitter). With this critical information, they will make real-time decisions to favor one circuit over another, depending on the needs of an application (performance-based routing) and/or the rules defined by the admin (policy-based routing).

As an example, for two sites connected with an expensive private line and a cheap public circuit, the routers can be configured to always prefer the latter for inter-site communication. But, if that degrades (which is common, as the Internet is a shared network and cannot offer an SLA), they would move real-time applications (such as a call between two VoIP phones) to the more stable private one, incurring additional costs but protecting the overall experience of the end users.
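
The decision in this example can be sketched as a simple rule: use the cheapest circuit that currently meets the application's needs. Everything below is an illustrative assumption rather than any vendor's algorithm, including the `PathStats` type, the `pick_path` function and the thresholds for voice traffic.

```python
from dataclasses import dataclass

@dataclass
class PathStats:
    name: str
    loss_pct: float
    latency_ms: float
    jitter_ms: float
    cost: int  # relative cost; lower is cheaper

def pick_path(paths, max_loss_pct, max_latency_ms, max_jitter_ms):
    """Prefer the cheapest path that meets the application's thresholds."""
    healthy = [p for p in paths
               if p.loss_pct <= max_loss_pct
               and p.latency_ms <= max_latency_ms
               and p.jitter_ms <= max_jitter_ms]
    if healthy:
        return min(healthy, key=lambda p: p.cost)
    # Nothing qualifies: fall back to the least-bad path.
    return min(paths, key=lambda p: (p.loss_pct, p.latency_ms))

VOICE = dict(max_loss_pct=1.0, max_latency_ms=150.0, max_jitter_ms=30.0)
mpls = PathStats("private", loss_pct=0.0, latency_ms=40.0, jitter_ms=2.0, cost=10)

broadband = PathStats("public", loss_pct=0.2, latency_ms=60.0, jitter_ms=8.0, cost=1)
print(pick_path([mpls, broadband], **VOICE).name)  # public

broadband = PathStats("public", loss_pct=4.0, latency_ms=180.0, jitter_ms=45.0, cost=1)
print(pick_path([mpls, broadband], **VOICE).name)  # private
```

In practice the measurements would be refreshed continuously from the probes, so the choice can change from one moment to the next.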

For more videos on physical security & networking, follow Dimi on his YouTube channel: https://www.youtube.com/c/DimitrieSanduTech