By Dimi Sandu, Head of Solutions Engineering (EMEA)

WANs (Wide Area Networks) connect multiple locations together, carrying data between the end devices and servers that exchange information across them. If you need a refresher on some of the terms and definitions used below, please check this article here, which describes the way the WAN and multiple LANs (Local Area Networks) work together.

To understand this critical component of the infrastructure, it is important to go back in time and visualize some of the more significant changes that have occurred within computer networks.

The initial use of networks was to transfer data between a small number of computers and servers running specialized applications (such as CRMs, email, and website access). The technology was expensive and slow but, as with everything, scale and adoption pushed vendors to innovate, making devices and applications cheaper, faster, and more widely available.

A traditional network was characterized by multiple locations, or LANs (Local Area Networks), connected to one or a couple of major offices (such as the headquarters) via a series of private links. At that point, the Internet was not as reliable as it is today, making it unsuitable for this inter-office transfer of data. Major service providers (e.g. AT&T, Verizon, BT, Vodafone, O2) would offer, at a high cost, private circuits (a.k.a. leased lines) built on top of their own WANs, spanning the globe.

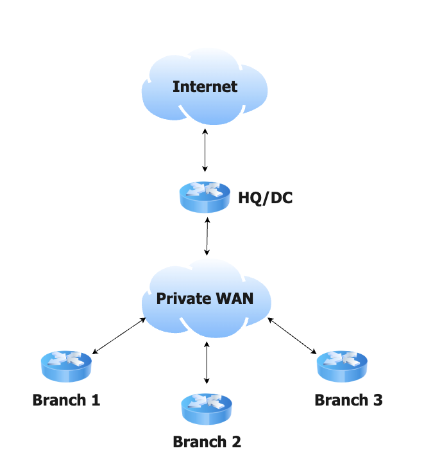

The customer had to install one or a couple of routers at each location, with redundancy becoming more important as computers and the applications running on them became business critical (an article about redundancy and how to achieve it can be found here). These routers would carry data from each office (or branch) to the HQ, where the servers resided in the data center (DC). If two branch offices had to communicate with each other, they would do so via the HQ. This type of architecture came to be known as hub & spoke.

Diagram 1: a simplified example of hub & spoke architecture
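To make the hub & spoke behavior concrete, here is a minimal sketch of the path traffic takes between sites. The function name and the site labels are purely illustrative assumptions; it models the topology described above and is not any vendor's routing logic.

```python
def hub_and_spoke_path(source: str, destination: str, hub: str = "HQ") -> list[str]:
    """Return the ordered list of sites a packet crosses in a hub & spoke WAN."""
    if source == destination:
        return [source]
    if hub in (source, destination):
        # Branch-to-HQ (or HQ-to-branch) traffic uses a single private link.
        return [source, destination]
    # Branches have no direct link to each other, so traffic transits the hub.
    return [source, hub, destination]


print(hub_and_spoke_path("Branch A", "HQ"))        # ['Branch A', 'HQ']
print(hub_and_spoke_path("Branch A", "Branch B"))  # ['Branch A', 'HQ', 'Branch B']
```

Every branch-to-branch exchange adds a hop through the HQ, which is why the hub's circuits carry the bulk of the load in this design.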

As Internet traffic was not yet common, companies would install a small Internet line at their HQ (called the breakout), filtering and securing its traffic with firewalls. With very few, if any, personal devices, it was mostly used by the company’s servers to exchange information with third parties (e.g. an on-premises email server, installed in the DC, sending and receiving emails outside the organization).

If all of the above seems very different from what you are experiencing now, you are correct. Many things have changed, forcing organizations to reconsider the way their networks are set up so that they do not become bottlenecks. These changes include:

1) The proliferation of end devices:

- Where employees once had to share a computer, or only some had access to one, these days each person has on average 2 or 3 devices, used concurrently;

- In addition, employees prefer the flexibility of using different devices, mixing the ones issued by IT with their own (this is called Bring Your Own Device, or BYOD);

2) Applications moving to the cloud:

- With the advent of Office 365, AWS, Microsoft Azure, Zoom, etc., it made financial sense for most companies to redeploy some, if not all, of their applications to the cloud, minimizing or eliminating their data centers;

- Compared with a decade ago, a significant number of applications now deal with the real-time exchange of data (e.g. VoIP calls, web conferences); these multimedia applications require a clean transmission network, as even minor slowdowns affect them greatly.

This rapid growth in the number of devices and overall traffic volume puts enormous stress on the WAN lines. Furthermore, the suboptimal routing of a hub & spoke design, where cloud-bound traffic must first travel to the HQ before reaching the Internet, adds delay and potential issues, negatively impacting the user experience when using multimedia applications. An administrator can choose to address this in the short term by increasing the bandwidth of the circuits, but this is only a stop-gap solution, with costs spiraling out of control and performance issues reappearing further down the line.

BYOD and the move to cloud applications bring further challenges to a business from a security perspective, as unregulated and potentially malicious devices and applications now have direct access to the LAN, bypassing the firewall. This, however, is a separate discussion, outside the scope of this article (read more about Next Generation Security here).

To address the scalability and performance issues of traditional hub & spoke architectures, built on the back of expensive private circuits, a major redesign took place, embracing not only the increased availability and low cost of the Internet, but also the launch of wireless technologies such as LTE/4G and 5G. This new technology is called SD-WAN (Software-Defined Wide Area Network).

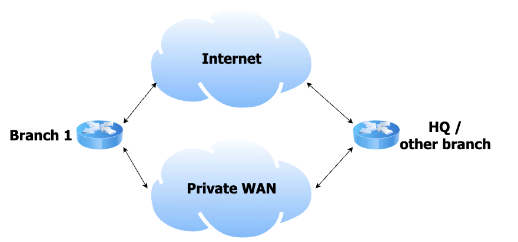

Instead of forcing all the traffic to the DC through an expensive circuit, just for it to break out to the Internet, a branch router is provisioned with one or more Internet lines, with the private circuit becoming a backup or being eliminated altogether. This streamlines the traffic bound for the Internet, allowing it to ‘escape’ as soon as possible, and also lowers the cost of the private WAN (which tends to be more expensive than a plain broadband/Internet line).

Diagram 2: A simplified connectivity diagram between a branch and another office

Further cost savings can also be achieved, without impacting the application performance, by routing the traffic between the offices directly (bypassing the HQ), using all available lines, and prioritizing the cheaper ones.

As an example, on a regular day the inter-office traffic can use a cheap Internet connection, but if this were to degrade or fail, the routers would automatically move the business multimedia traffic to the more expensive private line, or to a 4G/5G backup. This is referred to as performance-based routing, and relies on the routers at each end probing all the lines in order to have a real-time understanding of the health of each circuit, which they weigh against the inherent needs of each application.
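The selection step can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular SD-WAN product works: the class names, the `cost_rank` field, and all thresholds and probe values are hypothetical, and the probe results are hard-coded rather than measured.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LinkHealth:
    name: str
    cost_rank: int      # hypothetical ranking: lower means cheaper
    up: bool
    latency_ms: float   # latest probe result (illustrative value)
    loss_pct: float     # latest probe result (illustrative value)


@dataclass
class AppRequirement:
    max_latency_ms: float
    max_loss_pct: float


def pick_link(links: list[LinkHealth], need: AppRequirement) -> Optional[LinkHealth]:
    """Return the cheapest link whose latest probe meets the application's needs."""
    healthy = [
        link for link in links
        if link.up
        and link.latency_ms <= need.max_latency_ms
        and link.loss_pct <= need.max_loss_pct
    ]
    return min(healthy, key=lambda link: link.cost_rank) if healthy else None


voice = AppRequirement(max_latency_ms=150, max_loss_pct=1.0)
links = [
    LinkHealth("internet", cost_rank=1, up=True, latency_ms=40, loss_pct=0.1),
    LinkHealth("lte", cost_rank=2, up=True, latency_ms=70, loss_pct=0.5),
    LinkHealth("private", cost_rank=3, up=True, latency_ms=25, loss_pct=0.0),
]
print(pick_link(links, voice).name)  # internet

links[0].loss_pct = 5.0              # the Internet line degrades
print(pick_link(links, voice).name)  # lte
```

In this sketch the cheapest line wins while it is healthy, and traffic moves to the next cheapest acceptable line as soon as a probe shows the first one no longer meets the application's thresholds.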

In addition, admins have multiple choices for treating traffic, depending on how critical it is for the business. So, while they can choose to let the guest traffic drop if the main Internet service goes down, they can also choose to use multiple circuits in parallel to increase throughput between two locations (e.g. to facilitate the fast transfer of large files). This is called policy-based routing and relies on the routers’ built-in ability to inspect traffic flows and identify which users and applications each one is tied to (referred to as deep packet inspection/application recognition).
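A rough sketch of such policies follows. It assumes the traffic class has already been identified by application recognition, which is out of scope here; the policy table, class names, and link names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_links: tuple[str, ...]  # links this class may use, in order of preference
    load_balance: bool = False      # True: spread flows across every usable link


# Hypothetical policy table; a real deployment would define its own classes.
POLICIES = {
    "guest": Policy(allowed_links=("internet",)),
    "voice": Policy(allowed_links=("internet", "private", "lte")),
    "file_transfer": Policy(allowed_links=("internet", "private"), load_balance=True),
}


def links_for(traffic_class: str, links_up: set[str]) -> list[str]:
    """Return the links a flow may use right now; an empty list means drop it."""
    policy = POLICIES[traffic_class]
    usable = [link for link in policy.allowed_links if link in links_up]
    return usable if policy.load_balance else usable[:1]


# Main Internet service is down: guest traffic is dropped, voice fails over.
print(links_for("guest", {"private", "lte"}))  # []
print(links_for("voice", {"private", "lte"}))  # ['private']

# Both circuits are up: large transfers use them in parallel.
print(links_for("file_transfer", {"internet", "private"}))  # ['internet', 'private']
```

Keeping the policy as data, separate from the selection function, mirrors the idea that administrators describe intent per traffic class rather than configuring individual routes.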

Besides that, another important optimization occurred, helping administrators better visualize the state of the infrastructure and push changes much faster and more easily. It relates to the management of the routers themselves: traditional networks required administrators to log into each one manually, using CLI (Command Line Interface) commands to modify the configuration or troubleshoot. With SD-WAN, most of the interaction with the infrastructure happens from a central point, regardless of the number of routers, and is based on a simple UI that does not require technicians to learn CLI syntax.
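The central management idea can be illustrated with a small sketch in which one shared template is combined with per-site overrides to produce every router's configuration. The function, the setting names, and the site names are assumptions made for illustration; real SD-WAN controllers expose their own vendor-specific interfaces.

```python
def render_configs(template: dict, site_overrides: dict[str, dict]) -> dict[str, dict]:
    """Build one configuration per router from a shared template plus site overrides."""
    return {
        router: {**template, **overrides}  # site-specific values win over the template
        for router, overrides in site_overrides.items()
    }


# Hypothetical settings defined once, centrally.
template = {"guest_failover": False, "voice_links": ["internet", "private", "lte"]}

site_overrides = {
    "branch-a": {},
    "branch-b": {"voice_links": ["internet", "lte"]},  # this site has no private circuit
}

configs = render_configs(template, site_overrides)
for router, config in configs.items():
    print(router, config)
```

A change to the template reaches every router on the next render, so the effort no longer grows with the number of devices being logged into one by one.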

For more videos on physical security & networking, follow Dimi on his YouTube channel: https://www.youtube.com/c/DimitrieSanduTech