By Dimi Sandu, Head of Solutions Engineering (EMEA)

Most enterprise-grade CCTV systems allow for advanced analysis of the footage, such as people or vehicle characteristics, face search, or license plate recognition. Although each vendor offers different capabilities and may require different hardware and software licenses, they all have similar requirements when it comes to camera positioning.

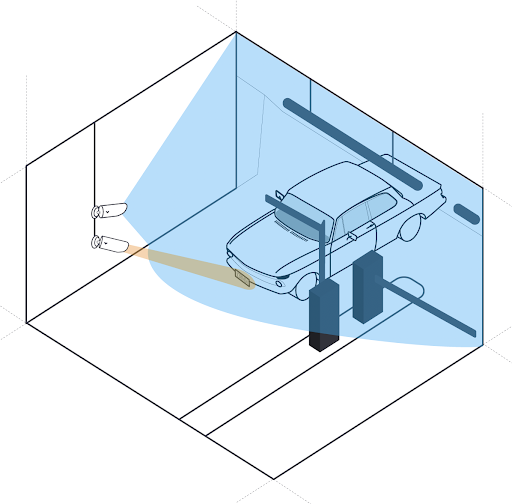

In a nutshell, the positioning and proximity of an LPR or analytics camera relative to the subject or object are critical, and they differ from what a deployment intended for general CCTV coverage requires.

Proximity Requirements for LPR and Advanced Analytics

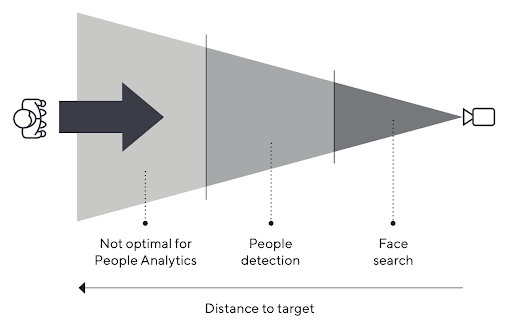

The first thing to consider is how close the camera is to the subject or object. Each vendor will list the maximum distance at which a given function will work, and exceeding it will result in events being missed and not analyzed. This is where the concept of PPF (Pixels Per Foot) or PPM (Pixels Per Meter) comes into play: it defines how clearly a subject or object is recorded at a given distance from the camera.

As the diagram above shows, when a person walks toward the camera, the PPF increases (more pixels cover them in the image), making their appearance clearer and the software more accurate.

Although it may seem obvious, the installer also needs to make sure that nothing obstructs the direct line of sight from the camera to the recorded target.

Each vendor will list the PPF requirements for each of their analysis functions, and an installer must use them when carrying out a site survey. PPF can be increased either by opting for a higher-resolution camera or by utilizing optical zoom, both of which increase the level of detail captured on a subject or object at a distance.
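As a rough aid for a site survey, PPF can be estimated from the camera's horizontal resolution and the width of the scene at the target distance. The sketch below assumes a simple pinhole model with no lens distortion; the function names and the example figures are illustrative and do not come from any vendor's data sheet.

```python
import math


def scene_width_ft(distance_ft: float, hfov_deg: float) -> float:
    """Width of the scene (in feet) covered at a given distance.

    Assumes an ideal lens: width = 2 * distance * tan(HFOV / 2).
    """
    return 2.0 * distance_ft * math.tan(math.radians(hfov_deg) / 2.0)


def pixels_per_foot(horizontal_px: int, distance_ft: float, hfov_deg: float) -> float:
    """Estimated PPF: horizontal pixels spread across the scene width."""
    return horizontal_px / scene_width_ft(distance_ft, hfov_deg)


def max_distance_ft(horizontal_px: int, hfov_deg: float, required_ppf: float) -> float:
    """Farthest distance at which the required PPF is still met."""
    return horizontal_px / (2.0 * required_ppf * math.tan(math.radians(hfov_deg) / 2.0))


def ppf_to_ppm(ppf: float) -> float:
    """Convert Pixels Per Foot to Pixels Per Meter (1 m is about 3.28084 ft)."""
    return ppf * 3.28084


if __name__ == "__main__":
    # Hypothetical camera: 1920 px wide, 90 degree horizontal field of view.
    for d in (10, 20, 40):
        print(f"{d} ft: {pixels_per_foot(1920, d, 90):.1f} PPF")
    # Hypothetical requirement of 40 PPF for some analytics function.
    print(f"max distance: {max_distance_ft(1920, 90, 40):.1f} ft")
```

With these example values, the camera delivers roughly 96 PPF at 10 ft, and the figure halves each time the distance doubles. A higher resolution raises `horizontal_px`, while optical zoom narrows `hfov_deg`; either change increases the result.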

Camera Positioning Considerations

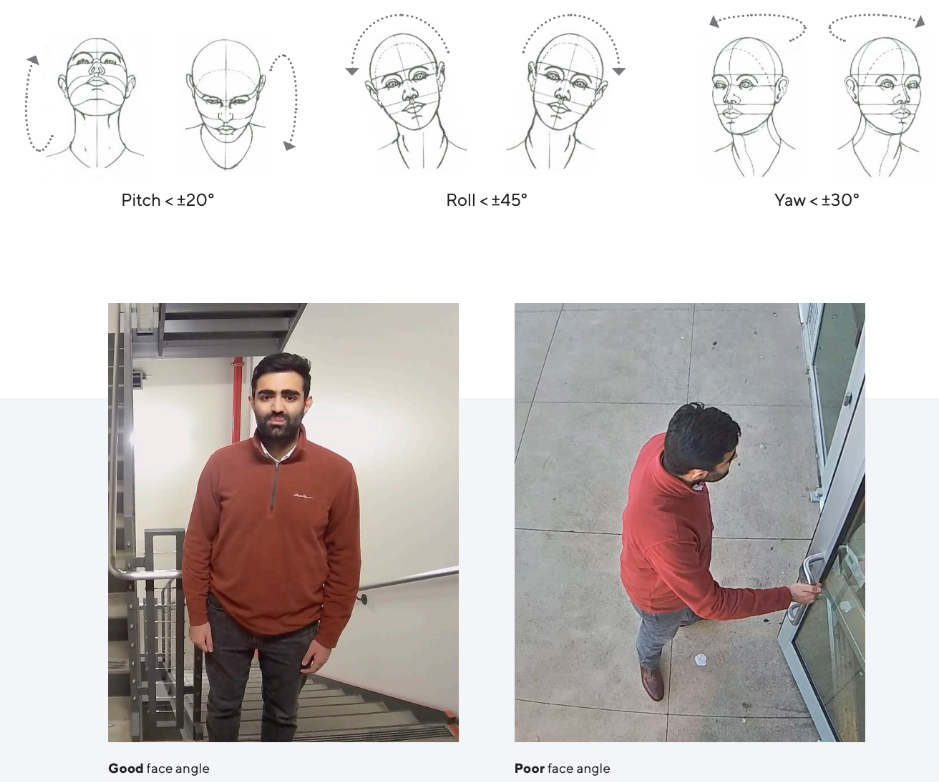

With regard to camera angle and positioning, special care needs to be taken with functions such as LPR (License Plate Recognition) or face search/matching. The recommendation is to keep the angle between the camera and the plate or face as small as possible, as larger angles will result in misread characters and false positives.

The most accurate way to match a face is to have the camera look straight into the face of a subject at a very close distance (e.g., phone face unlock or airport automated gates). The greater the distance or angle, the greater the probability of the algorithm not performing well.

The angle requirements for LPR are similar, with every vendor listing the maximum horizontal and vertical angles in their data sheets. And, as vehicles can travel much faster than people, a maximum speed should also be listed, and the installer must take care to place the LPR camera accordingly (e.g., at entrances or exits, or anywhere on the premises where traffic slows down).
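To check a planned mounting position against a data sheet, the horizontal and vertical angles can be approximated with basic trigonometry. This is a minimal sketch: it assumes the angles are measured from the target's direction of travel, which may not match how a given vendor defines them, and the function names and limit values are hypothetical.

```python
import math


def mounting_angles_deg(height_diff_ft: float, lateral_offset_ft: float,
                        distance_ft: float) -> tuple[float, float]:
    """Return (horizontal, vertical) angles in degrees.

    height_diff_ft:    camera height above the plate or face
    lateral_offset_ft: sideways offset of the camera from the target's path
    distance_ft:       distance along the path from camera to capture point
    """
    horizontal = math.degrees(math.atan2(abs(lateral_offset_ft), distance_ft))
    vertical = math.degrees(math.atan2(abs(height_diff_ft), distance_ft))
    return horizontal, vertical


def within_limits(horizontal: float, vertical: float,
                  max_horizontal: float, max_vertical: float) -> bool:
    """True if both angles are within the limits taken from a data sheet."""
    return horizontal <= max_horizontal and vertical <= max_vertical


if __name__ == "__main__":
    # Hypothetical install: camera 8 ft above the plate, 5 ft to the side,
    # capturing plates 30 ft down the lane; the 30 degree limits are made up.
    h, v = mounting_angles_deg(8, 5, 30)
    print(f"horizontal {h:.1f} deg, vertical {v:.1f} deg")
    print("OK" if within_limits(h, v, 30, 30) else "reposition camera")
```

Moving the capture point farther away reduces both angles, but it also lowers the PPF, so the two constraints have to be balanced during the site survey.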

Lighting Considerations

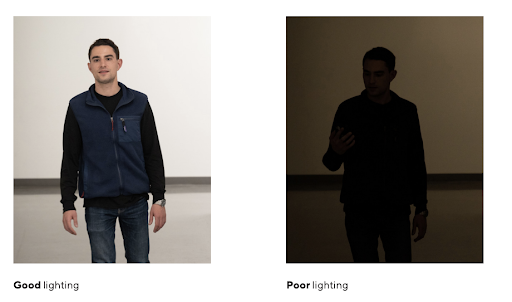

Another similarity between face search/matching and LPR is lighting, as poor positioning can result in shadows being cast over the face or plate and negatively impact accuracy. It is important to have good lighting and to turn on the WDR (Wide Dynamic Range) feature in scenes where there is a strong contrast between light and dark areas.

To summarize, advanced analytics require much more careful planning, regardless of the vendor you are deploying, and improper installations will lead to a large number of false positives and missed events. Besides the proximity of the camera to the subject or object, one also needs to consider the camera angle and the impact of lighting in the area.

But even with perfect placement of the recording device, there are always scenarios where the algorithms will fail to work accurately (e.g., two people walking one behind the other, with the person in front occluding the one behind, or someone wearing a baseball cap and a face mask), so one cannot expect or guarantee 100% accuracy.